You’re not as far behind as you think

Most people I know feel like they’re behind the curve. Amid all the hype and noise around AI, it’s easy to get the impression that you’re missing out, that everyone else knows more than you and that if you don’t catch up now, doing so later will be impossible.

In a world where media is designed to make us anxious, AI is an easy topic to exploit.

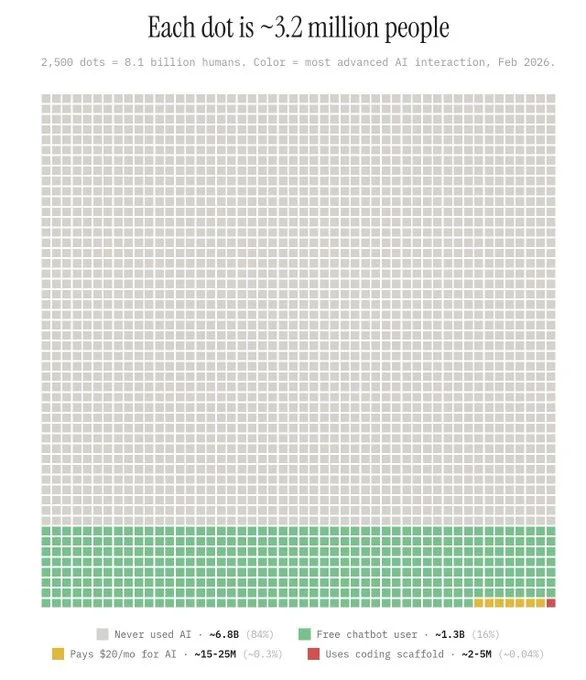

I’ve seen the graphic above a few times recently, and it got me thinking - where are we, really? You may have seen it too. If not, a brief explanation:

Each square on the grid represents 3.2 million people, for a total of 8.1 billion, colour-coded based on their most advanced AI interaction as of February 2026. The one red square in the bottom right corner is the 2-5m people who’ve used AI for coding - this is around 0.04% of the world’s population. The next group of yellow squares is the 15-25m people (roughly 0.2-0.3% of us) who pay $20 a month or more for AI. Several rows of green squares are the 1.3 billion people who’ve used free chatbots, and the remaining grey squares are those who’ve never used AI - all 6.8 billion of them.

84% of the world’s population have never used AI, and less than half a percent actually pay for AI tools. This is significantly different from the perception the media have created.
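For anyone who wants to check the arithmetic behind the graphic, here is a minimal Python sketch. It uses only the figures quoted above. The midpoints chosen for the two ranges (3.5m coders, 20m paying users) and the helper names `share` and `squares` are assumptions made for illustration, not part of the original graphic.

```python
# Figures as quoted in the description of the graphic.
WORLD = 8_100_000_000
PEOPLE_PER_SQUARE = 3_200_000

groups = {
    "used AI for coding": 3_500_000,     # midpoint of 2-5m (assumption)
    "pay $20+/month": 20_000_000,        # midpoint of 15-25m (assumption)
    "used free chatbots": 1_300_000_000,
    "never used AI": 6_800_000_000,
}


def share(people: int, world: int = WORLD) -> float:
    """Return a group's size as a percentage of the world population."""
    return 100 * people / world


def squares(people: int) -> float:
    """Return how many grid squares a group of this size would fill."""
    return people / PEOPLE_PER_SQUARE


for name, people in groups.items():
    print(f"{name:>20}: {share(people):6.2f}%  ~{squares(people):7.1f} squares")
```

The quoted group sizes are rounded, so they add up to slightly more than 8.1 billion. Treat the resulting shares as approximate.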

That’s not to say that AI isn’t an accelerating trend. Daily AI users nearly tripled from 116m in 2020 to 314m in 2024, and in the workplace users rose from 12% in mid-2024 to 26% by late 2025. The pace of adoption is real, but the scale of adoption is still small.

The headline numbers can hide a lot. OpenAI reports 800m weekly active users, but only 5% of these users have paid accounts and the company never reports daily active users. This mirrors the early days of social media, when industry commentators and investors discovered that monthly and weekly active users were essentially meaningless - daily active users was the key metric. AI chatbots typically see only 10-20% of their users active daily, meaning that although a growing number of people know about the tools and know how to use them, very few find a daily use for them.

In B2B use, according to IBM, only 26% of executives believe their AI programmes delivered the ROI they originally expected. 70-85% of AI projects still fail, and 77% of businesses worry about hallucinations and the issues they cause. One of the key factors in these failed projects has been underestimating the scale and difficulty of the change management required.

From a capability perspective, huge strides have been made, with AI capabilities doubling roughly every eight months over the last three years (based on research by the UK AI Security Institute), and AI systems are now highly capable in areas like coding, mathematics, and complex multi-step tasks. Applied to narrow domains, AI tools have been shown to unlock advances not previously thought possible.
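To put the doubling rate in perspective, here is a rough sketch of what "doubling every eight months for three years" implies if the rate held steady throughout. The function name `growth_factor` is hypothetical, and the calculation assumes smooth exponential growth. That is a simplification and says nothing about how the underlying research measures capability.

```python
def growth_factor(months: float, doubling_months: float) -> float:
    """Overall multiple after `months`, given a fixed doubling period."""
    return 2 ** (months / doubling_months)


# Three years (36 months) at one doubling every 8 months is 4.5 doublings.
print(f"{growth_factor(36, 8):.1f}x")
```

Under that assumption, 4.5 doublings works out to a little over a twentyfold increase in three years.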

Our fear (or expectation) of impending AGI or superintelligence, however, may be misplaced. In late 2025, the organisers of the ARC Prize - one of the most respected tests of AI reasoning - changed their test criteria as they realised developers were gaming the system rather than producing genuine advances. The latest version, ARC-AGI-3, introduced an interactive element that made tasks fundamentally harder for AI, while humans are able to solve them almost every time. Even when they can solve the problems, AI systems expend orders of magnitude more energy than humans do to achieve the same goal. AI still struggles to apply its immense power in narrow tasks to general reasoning and intelligence.

There are fundamental technical problems to solve before this will change. The first is continual learning. LLMs are frozen in their knowledge at the time of training, and don't have the memory capabilities to continue learning - doing so causes their initial training to degrade. Alongside this, models lack a true understanding of the world. They are able to infer and derive meaning from language, but lack the real experience to develop actual, physical knowledge or to make new discoveries beyond incremental improvements. It also looks increasingly likely that transformer-based LLMs have architectural limits to their capability that will prevent them from developing genuine intelligence. Other approaches are being researched, but remain very early and unproven.

The theory used to be that intelligence was just a matter of scale, but this is now thought to be untrue. True intelligence requires several major technical breakthroughs - whether these problems will take 5 years, 50 or 200 to solve is unclear.

I don’t want to minimise the importance of AI. As a leader, you should absolutely learn about it and look for ways to improve your business using new technology. But if you are thinking about this topic and using the tools, you’re way ahead of most of the world. AI is no doubt transformative, but the hype often outweighs the reality. You really aren’t as far behind as you think you are.

(P.S. If you know someone who needs to read this today, send it to them and encourage them to subscribe to the Versapiens blog. If you haven’t subscribed yet, come join us on our journey through the intersection between culture, technology and business.)